Introduction

We live in a digital, interactive and interconnected world. Advances in sensor and wireless technologies have enabled a large number of artefacts to be interconnected and augmented with information technology. The scenario envisaged by Mark Weiser in the early 1990s (Weiser 1991:86) of “cheap, low-power computers that include equally convenient displays, software for ubiquitous applications and a network that ties them together” is now a reality, primarily fuelled by the success of Radio Frequency Identification (RFID) technology, widely used for tracking objects and people within living contexts. Advances in materials research have also changed the role that consumer products play in everyday life (Jung et al. 2011; Kuniavsky 2010; Klooster et al. 2009; Peters 2011), with technology becoming progressively more embedded and pervasive.

Currently, a challenge for New Product Design (NPD) seems to lie in designing seamless interactions that do not rely on traditional computer input/output media but instead envisage unobtrusive, interconnected, adaptable, distributed and dynamic environments. In other words, rather than using screens and keyboards, people may communicate directly with clothes, furniture and household devices to control, manage and interact with the environment through the artefact (Kuniavsky 2010). Streitz et al. (2005) identified two complementary trends that justify the integration of information, communication, and sensing technologies into everyday objects: the growing miniaturisation of technological devices, now small enough to be nearly invisible, and the enhancement of the functionality of everyday objects to support rich interactions and behaviours. Trials in this direction foresee materials gradually embedded with sensors and dynamic output signals that augment their interactivity with the surrounding world. The growing diffusion of computational features in everyday products has blurred the boundaries between materials, interactive technologies and human-computer interaction, redefining the concept of ‘materiality’ itself. Specifically, materials are ‘smart’ (Smart Materials, SMs) in the sense that “they display smart behaviours”: a material can sense a stimulus from its environment (physical and/or chemical influences, e.g. light, temperature or the application of an electric field; Ritter 2007) and is capable of reacting to it in a useful, reliable, reproducible and usually reversible manner.
This engineered, embedded intelligence may change the way designers conceptualise, develop, and apply materials, as it will no longer be just about the physical form of a new product, but about intangible features, such as the way enhanced information is made available and how it stimulates the user’s ability to decide which action to perform and to be aware of the reasons underpinning that action. Streitz et al. (2005:4032) distinguished between system-oriented artefacts, where smart artefacts or the environment can take self-directed actions based on previously collected information, and people-oriented artefacts, able to empower functions in the foreground so that “smart spaces make people smarter”. The definition of people-oriented artefacts introduces the concept of objects that empower the user to take responsible and wise decisions and actions while always remaining firmly in control of the device. Enhanced with intelligent features, this pervasive, interactive materiality is expected to provide unprecedented interactive possibilities in which materials are experienced not just at the sensorial level, but also through the cognitive mechanisms and action-reaction responses they trigger (Micocci et al. 2016). The product interface, considered as the part of the product that allows a dialogue between the product and its users (Krippendorff 2005), is then embedded into the product, and the new intelligent materiality is its vehicle of information.

Intelligent materiality and immediate interactions

Research areas such as Pervasive and Ubiquitous Computing (Weiser 1991), the Internet of Things (Atzori et al. 2010) and Ambient Intelligence (Ducatel et al. 2001) foresee the environments of the future as intelligent in the sense that they can unobtrusively understand the users’ behaviour, predict their wishes and needs, and act accordingly. A large body of research has investigated how objects can be aware of their surroundings and memorise real-world events (Sterling 2005), how they can exchange information about themselves with other artefacts and computer applications (Holmquist et al. 2001), how they can take self-directed actions based on previously collected information, and how they can empower users to make decisions by taking informed and context-specific actions (Streitz et al. 2005). What makes these examples potentially successful is that they minimise the cognitive distance between a task intended by the user and the execution of that task (Jacob et al. 2008). Norman (1988) theorises this as a distance between our internal goals on one side and, on the other, the expectations and the availability of information specifying the state of the world (or of an artefact); the two resulting gaps are called the gulf of execution and the gulf of evaluation respectively. To be truly smart, artefacts should let the user focus on the activity they are designed to support and not on the embedded technology or the sequence of steps required to achieve the desired outcome; in other words, the two gulfs could be narrowed and the product made so immediate that it becomes invisible (Norman 1998). Through this lens, a challenge in defining a new intelligent materiality is to bypass additional devices such as mice, keyboards and screens that mediate the relation between the user and the product, and to support a seamless acquisition of information.

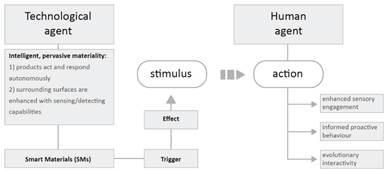

Departing from most of the current literature on applied cases of SMs in smart artefacts, the overarching goal of this paper is to present a scenario for redefining materiality within a ‘stimulus-action’ system in which smart interactivity takes place between users and the products in which SMs are embedded. Within the context of this paper, the term interactivity, which relates to the interplay between people and objects (Rammert 2008), indicates the collaboration of a human agent (the user) with one or more technological agents (the product in which SMs are embedded); it differs from interaction, which takes place exclusively between human agents. The aim of this approach is to enhance the experience of the user by making the dialogue with the product as natural and as immediate as possible. The potential benefit of such work is to make new products and smart experiences accessible to a wider, more inclusive audience of users.

Smart Materials as technological agents

In recent decades, SMs have been classified mainly by their embedded physical properties (piezoelectric materials, electro-rheological fluids, etc.), with specific attention to their ability to work as sensors and actuators, to change properties, and to exchange energy or matter (Gandhi et al. 1992; Banks et al. 1996; Culshaw et al. 1997; Srinivasan, McFarland 2001; Addington et al. 2005). These underlying material properties have long been known, but only recently have they been used in smart applications. Recent studies highlight the potential of SMs to be used as interfaces as well (Minuto et al. 2012; Vyas et al. 2012), and a large body of research has been published on the application of materials whose dynamic properties are exploited to provide originality in users’ experiences and new interaction possibilities (Ferrara et al. 2014; Ritter 2007).

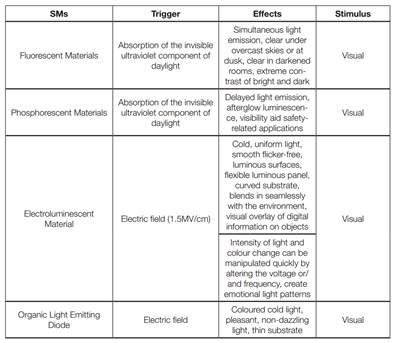

Four families of SMs are identified for their potential to convey an enhanced sensorial stimulus in a product design context and to create significant sensorial changes in both the visual and the haptic domain:

Light Emitting Materials: this family includes those energy-exchanging materials that produce light when their molecules are excited by energy, e.g. by light or an electric field (Ritter 2007). This family comprises Fluorescent and Phosphorescent materials, Electroluminescent Materials (EL), Light Emitting Diodes (LEDs) and Organic Light Emitting Diodes (OLEDs).

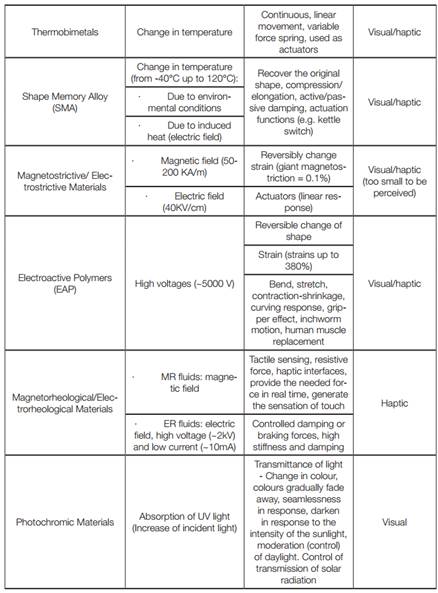

Shape Changing Materials: this family includes property-changing Smart Materials able to reversibly change their shape and/or dimensions in response to one or more stimuli (Ritter 2007). Shape Memory Alloys (SMA) and Shape Memory Polymers (SMP) are mainly known for their ability to recover from large strains to a previously memorised shape without permanent deformation. Thermobimetals (TB) are laminated composite materials that consist of at least two components, usually bands or strips, made from metals with different thermal expansion coefficients. Magnetostrictive materials change their shape (strain) and volume when subjected to a magnetic field. Electroactive polymers (EAP), known as ‘artificial muscles’ (Bar-Cohen 2000), are artificial actuators that emulate the behaviour of human muscles, exhibiting a large strain in response to electrical stimulation.

Rheological Changing Materials: rheological fluids are liquids whose viscosity can be modified by an external stimulus (Lozada et al. 2010). This stimulus can be a magnetic or an electric field (Magnetorheological and Electrorheological materials respectively) and, in both cases, the external field acts on the micron-sized particles suspended in the carrier fluid.

Colour Changing Materials: in these materials, technically called Chromogenic Smart Materials, a change in an external stimulus, such as light (Photochromic), temperature (Thermochromic), mechanical stress (Mechanochromic), electric field (Electrochromic) or chemical environment (Chemochromic), produces a change in their optical properties, namely in absorption or reflectance (Papaefthimiou 2010).
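The trigger-effect pairs named in the families above can be read as a simple lookup from external stimulus to responding material. The following is a minimal sketch of that reading, not part of the original study: the class and function names are hypothetical, only materials for which the text names a specific trigger are listed, and the ‘electrical stimulation’ of Electroactive polymers is treated as an electric field for the sake of grouping, which is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SmartMaterial:
    """One smart material with the family and trigger named in the text."""
    name: str
    family: str
    trigger: str  # external stimulus the material responds to


# Hypothetical catalogue restricted to materials whose trigger is stated
# explicitly in the text; it is illustrative, not exhaustive.
CATALOGUE = [
    SmartMaterial("Thermobimetal", "shape changing", "temperature"),
    SmartMaterial("Magnetostrictive", "shape changing", "magnetic field"),
    # Assumption: "electrical stimulation" is grouped under "electric field".
    SmartMaterial("Electroactive polymer", "shape changing", "electric field"),
    SmartMaterial("Magnetorheological", "rheological changing", "magnetic field"),
    SmartMaterial("Electrorheological", "rheological changing", "electric field"),
    SmartMaterial("Photochromic", "colour changing", "light"),
    SmartMaterial("Thermochromic", "colour changing", "temperature"),
    SmartMaterial("Mechanochromic", "colour changing", "mechanical stress"),
    SmartMaterial("Electrochromic", "colour changing", "electric field"),
    SmartMaterial("Chemochromic", "colour changing", "chemical environment"),
]


def materials_triggered_by(trigger: str) -> list[str]:
    """Return the names of catalogued materials that react to the trigger."""
    return sorted(m.name for m in CATALOGUE if m.trigger == trigger)


def families_triggered_by(trigger: str) -> list[str]:
    """Return the families containing a material that reacts to the trigger."""
    return sorted({m.family for m in CATALOGUE if m.trigger == trigger})


if __name__ == "__main__":
    print(materials_triggered_by("magnetic field"))
    print(families_triggered_by("temperature"))
```

Querying by stimulus rather than by family mirrors the designer’s point of view: the starting question is which change in the environment or in the user should be made perceivable, and only then which material can respond to it.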

Despite the wide variety of engineered properties of SMs, two main potentials are identified that characterise materials as technological agents acting as actuators of a dynamic stimulus:

1. Creating products that can act and respond autonomously: Doris Kim Sung investigates the potential of Thermobimetals to build kinetic architecture that autonomously reacts to changes in ambient temperature to self-regulate buildings; colour-changing sensors can be used as food labels in smart packaging that senses the physical and chemical parameters of the product and then communicates its quality, safety, shelf-life and usability (Kuswandi et al. 2011); Surflex (Coelho et al. 2008) combines the physical properties of Shape-Memory Alloy and foam to create a surface that can be electronically controlled to deform and gain new shapes; Shape Shift is a dynamic wall designed by Manuel Kretzer that uses Electro-Active Polymers as an ultra-lightweight, flexible material able to change shape electrically without the need for mechanical actuators.

2. Enhancing every surrounding surface with sensing/detecting capabilities: Future Care Floor (Klack et al. 2011) shows an integration of SMs into the home environment; this sensor floor seamlessly integrates piezoelectric sensors to support older and frail people living independently at home by detecting abnormal behavioural patterns of the inhabitant and activating rescue procedures in case of falls or other emergency events. Chang et al. (2006) designed a dining table that tracks table-top interactions, such as transferring food among containers, and monitors eating by means of weight sensors and an RFID surface embedded in the table. The aim is to help people track the amount of food shared and consumed for diet management. Furthermore, common materials (such as paper or fabric) can also be augmented with expressive and interactive potential, for example through Shape-Memory Alloy wires (Qi et al. 2012; Saul et al. 2010) or Thermochromic and Conductive ink (Kaihou et al. 2013; Berglin 2005).

The stimuli observed are mainly visual and haptic, with a high degree of personalisation. Table 1 (Appendix A) extends and summarises the SMs identified, with their trigger-effect system and the sensorial stimuli produced. The review reveals the role of materiality with embedded smart features in affording and supporting a dynamic interactivity with the user. In this approach, SMs are not merely add-on interfaces in product development but integral constituents of the product. It is therefore instrumental to understand the action possibilities that arise from the continuous agency they perform in everyday tasks. This helps to conceptualise the sensorial and interactive qualities that can become the ‘language’ of communication between users and an intelligent and pervasive materiality, in which materials are invested with intelligence when interacting with the user.

Action possibilities for the human agent

Moving from a primary sensorial reaction towards an articulated cognitive response of the user, the first action possibility of materials as technological agents is that of enhancing the multisensory engagement of the user. Embedded intelligence is expected to amplify the information delivered through different sensorial channels that take advantage of the hearing, sight, and touch of the user. Electrorheological fluids can open the possibility of producing a façade cladding that changes properties such as transparency, reflectivity, colour and even shape through a computer-controlled system that enables the creation of pulsating animations, running images and dark sparkling pictures. Furniture can change its optical properties to reveal the shape of our body, like Linger a Little Longer by Jay Watson (Fig. 1) and Voxel by SimbiosisO, for an enhanced sensorial experience. Jason Bruges Studio’s ‘Mimosa’ (Fig. 2), commissioned by Philips Lumiblade, is an interactive artwork that mimics responsive plant systems. Just as mimosa plants change to suit their environmental conditions, individual slim OLEDs are used to create delicate light petals, forming flowers that open and close in response to human body movement. Jason Bruges also designed The Nature Trail (Fig. 3), which has turned the corridor route from ward to surgery into a sensorial experience to distract children from what awaits. The installation integrates glowing LED animals behind a forest-like graphic wallpaper.

The enhanced multisensory engagement that pervasive, intelligent materiality may eventually create has the potential to encourage the user towards informed and proactive behaviour by delivering unmediated information. Radiate Athletics (Fig. 4) is a training garment that changes colour based on the heat released by the user’s body, showing which muscles are burning calories. Radiate Athletics uses Thermochromic inks and dyes that change colour as the temperature changes, revealing the user’s current level of performance in terms of heat output. This progressive detection and communication of changing body parameters creates a narrative that stimulates the user to perform a focused sports activity. The Creativi*tea Kettle by Sarina Fiero (Fig. 5) changes colour as the water inside heats. The teapot, coated with thermochromic dye, keeps its user informed and alert throughout the boiling process by turning an increasingly luminous red. Blades of grass made of Shape-Memory Alloy can be electrically controlled to communicate information to users through their appearance and movement, which makes them particularly well suited for giving directions in indoor environments, or for ambient persuasive guidance and entertainment (Minuto, Nijholt 2012).

The core potential observed arises when materials take into account the evolving nature of the human agent and support them with personalised, changeable and emotionally deep experiences. A product that addresses this is Ref (Fig. 6), a wearable device that supports users in improving and training their emotional skills. By monitoring changes in the user’s pulse, this haptic device, which straps onto the user’s wrist, twists, curls, and nuzzles against the skin to soothe, relax, or enhance the user’s behaviour; the aim is to provide a self-help tool that can also coach the user in practising a mind-balancing breathing pattern by showing an intuitive body language. Aura (Righetto et al. 2012) is a set of garments that enables non-verbal communication between expectant parents. A garment for the expectant mother is equipped with piezoelectric sensors to detect foetal movement; signals are translated into a ‘light message’ for the mother-to-be and sent via WiFi to a bracelet for her partner. The bracelet converts the signal into a ‘haptic message’. The simultaneous, participatory, deep and extremely personal connection of both partners is enhanced by the messages transmitted by the devices.

Discussion

The three action possibilities identified lead the application of embedded intelligence towards what is defined as ‘smart interactivity’ (Fig. 7), characterised by a stimulus-action system.

Based on this system, research conducted at Brunel University London, partially supported by the EU-funded FP collaborative research project Light.Touch.Matters (LTM), under agreement n°310312, applies the four SM families identified for their potential to physically embody non-linguistic analogies and metaphors, through a combination of visual and haptic feedback, to help users retrieve their past knowledge and seamlessly acquire new information (Micocci et al. 2018). Under the umbrella of ageing studies, the research demonstrated how the adoption of SMs in a non-linguistic metaphor setting may significantly help older and younger adults to link past and new knowledge in order to interact intuitively with products to which they have had limited prior exposure, and may narrow the generation gap in understanding novel technologies. In other words, smart interactivity paves the way to maximising the exchange of information between the human and the environment, which the human agent will eventually convert into volitional and informed actions. However, further studies are recommended to unveil the action possibilities that may be achieved, and to further test and evaluate new smart and engineered materials that enhance, at different levels of intervention, an informed cognitive response of the user.

Conclusion

The process of conceptualising and designing Smart Interactivity considers materials as technological agents, with their engineered features and dynamic stimuli, together with the action possibilities of the human agent. The results of this review suggest that intelligent and pervasive materiality could promote multi-sensory engagement of the user and could help in the process of information exchange between human and artefact. Through this design approach, SMs can be employed to reduce the cognitive distances in evaluation and execution that unclear interfaces at times create.